Categories, PROPs, and control: a compositional language for circuit reasoning

When people first meet category theory, they often meet it as a strange zoo of abstract definitions.

This post takes a different route:

- start from circuits (things you can plug together),

- notice the two ways you compose them (serial + parallel),

- and then explain why categories, PROs, PROPs, and finally controlled PROPs are a very natural ”language layer” for reasoning about circuits by rewriting.

1. What is a category?

A category is the smallest piece of mathematics that can talk about composition in a principled way.

1.1 The definition

A category consists of:

- objects

- morphisms (arrows)

- a composition operation: if and , then

- an identity arrow for every object

subject to two axioms:

- Associativity: whenever both sides make sense.

- Unit laws: and for every .

That’s it.

A useful mental model is:

Objects are ”types of things”, morphisms are ”processes”, and composition is ”do one after the other”.

If you want a friendly motivational video, these ones have the right vibe:

1.2 A picture you can keep in your head

A category is like a directed graph where some paths are declared equal because they represent the same composite process.

flowchart LR

A((A)) -->|f| B((B))

B -->|g| C((C))

A -->|g∘f| C

The whole point is: if you can compose, you can reason modularly.

1.3 Examples (this is where the definition stops being abstract)

Here are some categories you already know:

-

Set objects = sets, morphisms = functions.

-

Vect_k objects = vector spaces over , morphisms = linear maps.

-

Posets as categories Any partially ordered set is a category where:

- objects = elements of

- there is a morphism iff

- composition is forced (and unique when it exists).

These are called thin categories: between any two objects there is at most one arrow.

-

Monoids as categories (the ”one-object trick”) A monoid is exactly the same as a category with:

- one object

- morphisms are elements of

- composition is multiplication, identity is .

This last example is more important than it looks: it’s the first hint that algebraic structure can be encoded as categorical structure.

2. A little history and why anyone invented this

Category theory was introduced by Eilenberg and Mac Lane (1940s) as a way to formalize patterns of construction that kept recurring in topology and algebra.

But its modern ”engineering” motivation is almost embarrassingly simple:

If your subject has composable things, you want a calculus where ”plugging things together” is the primitive operation.

This is why categories show up in:

- programming language semantics (”programs are arrows”),

- logic (”proofs are arrows”),

- circuit theory (”circuits are arrows”),

- and of course quantum information (”processes are arrows”).

3. Categories are not enough for circuits

A plain category only knows how to do sequential composition.

But circuits have two compositions:

- Sequential: plug outputs into inputs (”do this then that”).

- Parallel: place side-by-side on separate wires (”do both independently”).

To model that second one, we need one extra layer of structure.

4. Monoidal categories: ”and also in parallel”

A monoidal category is a category equipped with a tensor product that behaves like ”parallel composition”.

Concretely, it gives you:

- for objects: (”system A together with system B”)

- for morphisms:

and a distinguished unit object (”no system”), plus coherence laws ensuring everything associates nicely.

4.1 Why monoidal categories match circuit diagrams

String diagrams are the reason this is such a good fit:

- wires represent objects,

- boxes represent morphisms,

- vertical stacking is composition,

- horizontal juxtaposition is tensor.

In that world, many ”proofs” are just topological deformations of pictures.

5. PROs: a monoidal category of arities

Now we specialize monoidal categories to something very circuit-like.

A PRO (short for ”PROduct category”, historically) is:

a strict monoidal category whose objects are the natural numbers , with tensor on objects given by addition:

Interpretation: object is ” wires”.

A morphism is ”a box with input wires and output wires”.

5.1 What PROs give you (and what they don’t)

PROs capture planar wiring: you can compose and put side-by-side, but you do not automatically get wire swaps.

This is already enough to encode a lot of algebra.

5.2 Example: the PRO for monoids

A (not-necessarily-commutative) monoid has two operations:

- multiplication

- unit

with equations:

- associativity:

- unitality:

In a PRO, these equations are literally equations between diagrams.

This is one of the deep ideas behind PROs/PROPs:

They let you treat an algebraic theory as a diagrammatic rewriting system.

6. PROPs: PROs + permutations = real circuit wiring

Most circuit languages allow you to swap wires.

Categorically, this is symmetry.

A PROP (”PROduct and Permutation category”) is a PRO equipped with:

- for every , a symmetry isomorphism

- satisfying the usual coherence laws (so permutations behave like permutations)

Equivalently:

A PROP is a strict symmetric monoidal category whose objects are natural numbers.

6.1 Why quantum circuits fit in PROPs so naturally

In a circuit model:

- object means ” qubits” (or qudits, or wires in general)

- morphisms are unitary circuits on wires

- tensor is ”put circuits side-by-side”

- symmetry is SWAP / wire permutation

So, at the level of pure syntax + wiring, a quantum circuit language is basically a presented PROP.

This is exactly what you want when building rewrite engines: you don’t want every rule to keep re-proving ”SWAP behaves like a swap”.

7. Presentations: ”a circuit language = generators + relations”

If you’ve seen group presentations (”generators and relations”), this is the same idea, one categorical level up.

A presented PROP consists of:

- a set of generating gates (morphisms of various arities)

- a set of equations (relations) between diagrams

- and then you take the free PROP generated by those gates modulo those equations

This is not just philosophy. It is literally how many ”complete rulebooks” are stated:

- choose generators,

- list rewrite rules,

- prove they are sound and (ideally) complete.

8. The idea of ”control” as structure

Now we come to the central theme that motivates controlled PROPs.

In circuits, control is not a single gate: it’s a pattern.

For qubits, controlling gives CNOT. Controlling a whole subcircuit gives a controlled subcircuit.

So control is naturally an operation on circuits, not just another circuit.

8.1 The pain point (why this matters for rewriting)

If control is treated only as a derived gadget, then a complete theory often needs rules that mention arbitrarily many control wires, because ”multi-control” is unbounded.

A different design choice is:

Treat control as a primitive constructor with its own coherence laws.

Then you get a powerful meta-rule:

If , then their controlled versions are equal automatically.

That single principle is a massive compression of a rewrite theory.

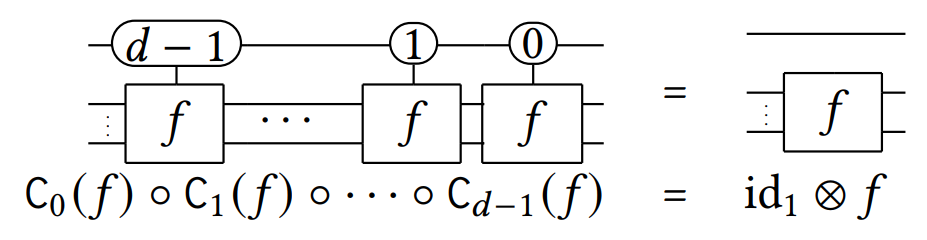

9. Controlled PROPs (Delorme–Perdrix): control as a functor

One clean formalization is due to Delorme and Perdrix.

9.1 The core definition

Let be a PROP, and let denote the subcategory of endomorphisms (i.e. morphisms ).

A control functor is a functor

that:

- sends each object to (adds one control wire),

- sends each endomorphism to a controlled version ,

- and satisfies a small set of coherence laws saying that nested controls commute appropriately and that control interacts well with the PROP’s symmetries.

In other words:

A controlled PROP is a PROP equipped with such a control functor.

9.2 What this buys you immediately

The defining property of a functor is that it preserves:

- composition:

- identities:

So ”control distributes over doing things in sequence” is built in for free.

And because this is a functor on the equational theory:

if your rewrite rules prove , you automatically get .

That is exactly the kind of ”lifting” you want in a rewrite engine.

9.3 What does control mean semantically?

In finite-dimensional Hilbert spaces (the usual quantum model), the standard qubit control-on- operation is:

For qudits of dimension , you can control on any basis value :

This is the clean ”block-diagonal” picture: apply on the target only in the control subspace you selected.

9.4 Points: ”true” and ”false” for control

A pleasant extra feature is the notion of points for a control functor: two special states (diagrammatically: morphisms) that:

- annihilate the controlled operation (”false”)

- fire the controlled operation (”true”)

This captures, abstractly, the idea that plugging into the control wire makes the controlled circuit behave like identity, while plugging makes it behave like .

10. Polycontrolled PROPs: control comes in families

For qubits there are already multiple reasonable ”control choices” (control on , on , on , etc.), related by conjugating the control wire.

For qudits, something even more canonical happens:

There are natural basis controls: control-on-, …, control-on-.

So one is led to a PROP equipped with a family of control functors:

Once you have multiple control functors, you can ask structural questions:

- do they commute (in some sense)?

- are they exhaustive (do they ”cover” all basis cases)?

- how do they relate by conjugation?

These are not ”quantum-specific” questions; they’re about the wiring logic of control.

11. Controlled PROPs (Heunen–Kaarsgaard–Lemonnier): eight equations for computational control

A complementary approach comes from Heunen, Kaarsgaard, and Lemonnier, who isolate control as a tiny equational theory.

11.1 Start from a ”crop” (a prop of endomorphisms + a NOT)

They introduce a controllable prop (they abbreviate ”crop”):

- a prop whose morphisms are endomorphisms ,

- equipped with a distinguished involution (think NOT), with .

From this base, they freely adjoin controlled versions of morphisms.

11.2 Two controls (positive and negative)

They use two endofunctors:

- : ”control on 1” (positive control)

- : ”control on 0” (negative control)

and show you only need a small, interpretable list of equations—eight of them—to govern how control behaves.

11.3 What the equations mean (informal reading)

The eight equations include:

- functoriality: controlled composition and controlled identity behave as expected

- strength: control ignores extra unused wires (it behaves well under adding identities)

- colour change: positive and negative control relate by conjugating with NOT

- complementarity: doing both controls amounts to ”always do ”

- commutativity: controls of opposite polarity commute appropriately

- swap / swap coherence: nested controls interact properly with wire swapping

One way to read their result is:

Control is not ”a million different controlled gates”. It’s a small structural theory you can add on top of any base circuit theory.

They also connect this syntactic construction to a semantic universal property: the controlled theory corresponds to a free kind of ”rig-like” categorical structure.

12. Why this matters for rewriting and completeness

At this point you can see the strategic advantage:

-

A PROP presentation of a circuit language separates:

- wiring bureaucracy (structural laws)

- fragment-specific gate identities

-

A controlled PROP adds one more structural layer:

- ”control is a constructor” with coherence laws

And this changes the shape of rewrite systems you can hope for.

The slogan becomes:

Make control structural, and local rewrite systems become possible.

References and further reading

-

Delorme & Perdrix, Diagrammatic Reasoning with Control as a Constructor, Applications to Quantum Circuits: https://arxiv.org/abs/2508.21756

-

Heunen, Kaarsgaard & Lemonnier, One rig to control them all: https://arxiv.org/abs/2510.05032

-

My paper (the qudit completeness result framed via polycontrolled PROPs): https://arxiv.org/abs/2602.09873

-

For a classic textbook introduction:

- S. Mac Lane, Categories for the Working Mathematician.

-

For string diagrams and monoidal categories (highly recommended once you’re comfortable):

- A. Joyal & R. Street, work on string diagrams (many expositions exist).

- P. Selinger, survey material on dagger compact categories and quantum processes.

Comments